Understanding the CPU profile of the TeamCityServer

Running TeamCity 5.1.5

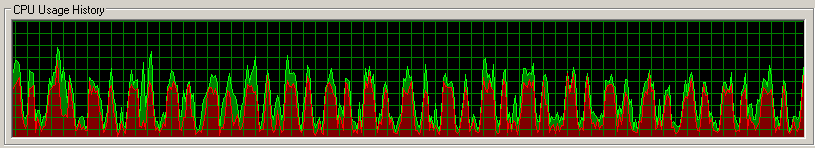

Using Sysinternals Process Explorer, we've been watching the CPU Usage History of the server process (java.exe), and we see periodic surges in CPU use. Eyeballing it, the cycle seems to be about 20 seconds long, with the CPU running hot for about half of that time.

Anybody know what that is, and whether or not it is normal?

From other evidence, especially looking at the thread properties, it appears that it might be the CustomDataStorageManager flush, but I can't imagine what requires so much flush on a regular schedule. My previous guess was handling the build log messages coming from the agents, but I'm still seeing a similar work pattern when most agents are idle.

Context: we've been having trouble lately with the server becoming unresponsive with the CPU pinned, but we don't have a baseline from our previously stable server to compare to. So I'm stuck trying to guess which bits of behavior are normal, and which are not. At the moment, my belief is that this is normal - but I'm not holding that belief very tightly.


It currently looks like I'm catching a flush of the timestamps for the vcs roots. Which is to say that [teamcity.data.path]/system/pluginData/customDataStorage/buildTypes/bt????/[teamcityPrevCallTimestamp_vcsTrigger] appears to be updated every 20 seconds or so.
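For anyone who wants to confirm the same observation on their own server, here is a minimal sketch that lists files under a directory modified within the last N seconds. The class and method names are made up for illustration; the directory to pass in is the customDataStorage folder mentioned above, wherever it lives on your machine.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

/** Hypothetical helper: not part of TeamCity. */
class RecentlyModifiedFiles {

    /** Returns regular files under root whose last-modified time is at or after sinceEpochMillis. */
    static List<Path> modifiedSince(Path root, long sinceEpochMillis) {
        try (Stream<Path> paths = Files.walk(root)) {
            return paths
                .filter(Files::isRegularFile)
                .filter(p -> {
                    try {
                        return Files.getLastModifiedTime(p).toMillis() >= sinceEpochMillis;
                    } catch (IOException e) {
                        // The file may have been replaced mid-scan; skip it.
                        return false;
                    }
                })
                .collect(Collectors.toList());
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Usage: java RecentlyModifiedFiles <directory> <windowSeconds> */
    public static void main(String[] args) {
        Path root = Paths.get(args[0]);
        long windowMillis = Long.parseLong(args[1]) * 1000L;
        long since = System.currentTimeMillis() - windowMillis;
        List<Path> recent = modifiedSince(root, since);
        System.out.println(recent.size() + " file(s) modified in the window");
        recent.forEach(System.out::println);
    }
}
```

Running it a few times with a window of about 20 seconds should show whether most of the per-configuration files are being rewritten on every cycle.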

If I'm translating correctly, what's happening is that every 20 seconds or so, each build configuration checks its vcs build trigger to determine if the vcs root has received an update. As part of that check, it updates the timestamp that is tracking when the trigger was last checked. This marks the property dirty, which means that the CustomDataStorageManager wants to update the property file, and it will do so when the iterator next reaches that property.
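To make the mechanism above concrete, here is a minimal sketch of a dirty-flag store with a periodic flush. This is my guess at the general pattern, not TeamCity's actual CustomDataStorageManager code; every class and method name below is hypothetical, and the disk write is replaced by collecting the ids that would have been written.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical dirty-flag storage; illustrates the pattern only. */
class DirtyFlagStorageSketch {

    /** State for one build configuration's property file. */
    static final class Entry {
        final Map<String, String> values = new LinkedHashMap<>();
        boolean dirty;
    }

    private final Map<String, Entry> entries = new LinkedHashMap<>();

    /** A trigger check stores its new timestamp, which marks the entry dirty. */
    void put(String buildTypeId, String key, String value) {
        Entry entry = entries.computeIfAbsent(buildTypeId, id -> new Entry());
        entry.values.put(key, value);
        entry.dirty = true;
    }

    /**
     * Periodic flush: iterate over all entries and "write" only the dirty ones.
     * Returns the ids that were written so the amount of work is visible.
     */
    List<String> flush() {
        List<String> written = new ArrayList<>();
        for (Map.Entry<String, Entry> e : entries.entrySet()) {
            Entry entry = e.getValue();
            if (entry.dirty) {
                // A real implementation would rewrite the property file here.
                written.add(e.getKey());
                entry.dirty = false;
            }
        }
        return written;
    }
}
```

The point of the sketch: if every trigger check updates its timestamp, every entry is dirty on every cycle, so the dirty flag saves nothing and each flush rewrites every file.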

In other words, I'm seeing a CPU spike because I have 1150 build triggers updating their timestamps every 20 seconds or so, and it takes some work to get all of that written to the disk.
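As a rough back-of-the-envelope figure, assuming each trigger causes one file write per cycle (an assumption, since I haven't seen the code), the average write rate works out like this:

```java
/** Hypothetical estimate of the average write rate implied by the numbers in this thread. */
class FlushLoadEstimate {

    /** Average file writes per second if every trigger is rewritten once per period. */
    static double writesPerSecond(int triggers, double periodSeconds) {
        return triggers / periodSeconds;
    }

    public static void main(String[] args) {
        // 1150 triggers, one cycle roughly every 20 seconds.
        System.out.println(writesPerSecond(1150, 20.0)); // prints 57.5
    }
}
```

Since the CPU appears busy for only about half of each cycle, the rate during the busy half would be roughly double that average.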

Edit: NVM.

You described it very well even without seeing the code. I am impressed! Yes, you're right: custom data storage is updated periodically to store the new state of the triggers. But before fixing it, it would be nice to know whether this is actually a problem. Do you experience server slowdown because of it?

It's clearly not the original problem, which was making the system completely unusable.

I suspect that it makes the web UI more sluggish than it should be, when your request happens to reach the server at an unlucky time. But that's purely speculative - it's not so bad that my users are demanding that I fix it, which puts limits on how much investigation I can invest.

Here's a picture, to give you a sense for the extent of the work being done.

I don't have a sense for what that curve would look like when the number of build triggers is more reasonable. At the moment, we're managing 110 active projects with 954 build configurations, and an additional 145 archived projects. That makes me guess that the 1150 build triggers are only coming from the active projects - I may be able to relieve some of the pressure by archiving more aggressively.

Answering your question another way: if you released a patch to address this in 5.1.5, we'd apply the patch, but we wouldn't be in a hurry to do it. Not with the current load and symptoms, anyway.

We've done a lot of performance optimizations in 6.0. So if you plan to upgrade, chances are that performance will be better.